At Silectis, we deploy Magpie clusters across AWS, Google Cloud, and Azure. But because some of our internal infrastructure resides only on AWS, we need to establish private connections between these environments so that clusters on Google Cloud and Azure can access those private AWS resources. In this post, we’ll walk through the details of how we set up this multi-cloud network using site-to-site VPN connections in Terraform.

MANAGING CLOUD INFRASTRUCTURE WITH TERRAFORM

Terraform is a great way to manage cloud infrastructure: engineers declare the desired infrastructure configuration in code that can be version controlled. AWS, Google, and Azure each have a similar offering, but those tools are limited to managing resources within their own clouds. Since Terraform supports many different cloud infrastructure providers, along with providers for other kinds of services, it’s a good fit for a multi-cloud architecture.

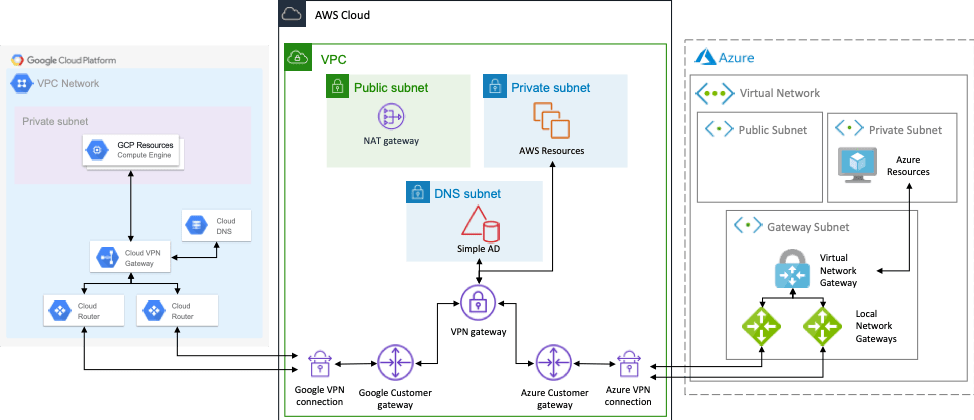

Now is a good time to stop and talk about our desired network architecture. Since AWS is where our shared private resources reside, it will serve as a hub. We can peer other VPCs in AWS with this hub VPC to get access to the private resources, and site-to-site VPNs will connect the other clouds to this AWS hub VPC. Note that each site-to-site VPN connection has two VPN tunnels configured for high availability. AWS will also host DNS that other clouds can access to resolve AWS private hostnames. For this, we’ll use AWS Simple AD. This works for the configuration described here, but for more complicated networks, you may need to run your own DNS. The resulting network looks like this:

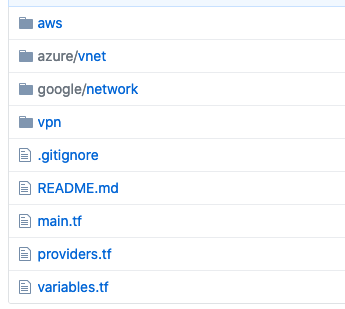

We’ll walk through creating all of this, except for the “resources” in each cloud’s private subnet, using a sample Terraform project hosted on GitHub. In the GitHub repository, the root directory has the main Terraform entry point and required variables, as well as a number of modules. There are AWS modules for the directory service and VPC, plus Google Cloud and Azure modules for virtual networks. The VPN module contains the infrastructure that connects the three networks together.

START BY CONFIGURING THE CSPs IN TERRAFORM

To begin, we need to configure each provider in the providers.tf file. For simplicity, we will assume a shared credentials file or environment variable authentication for AWS, CLI authentication for Azure, and a service account key file for Google Cloud. Since Cloud DNS private forwarding is a beta feature in Google Cloud, we also need to configure the google-beta provider.
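As a rough illustration of the provider setup described above, a providers.tf could look something like the following. This is a minimal sketch rather than the file from the sample project: every variable name here is hypothetical, and depending on your azurerm version you may also need to supply a subscription ID.

```hcl
# providers.tf (sketch). All variable names are hypothetical.
variable "aws_region" { type = string }
variable "google_project" { type = string }
variable "google_region" { type = string }
variable "google_credentials_file" { type = string }

# AWS: credentials are read from the shared credentials file
# or from the standard AWS_* environment variables.
provider "aws" {
  region = var.aws_region
}

# Google Cloud: authenticate with a service account key file.
provider "google" {
  credentials = file(var.google_credentials_file)
  project     = var.google_project
  region      = var.google_region
}

# The beta provider is configured the same way and is used only
# by resources that opt in with `provider = google-beta`.
provider "google-beta" {
  credentials = file(var.google_credentials_file)
  project     = var.google_project
  region      = var.google_region
}

# Azure: relies on an existing Azure CLI session (az login).
provider "azurerm" {
  features {}
}
```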

Next, we’ll create a simple AWS VPC with one public and one private subnet using the included VPC module. This also configures public and private route tables that will be used in the VPN configuration.
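A VPC module along these lines might contain resources like the ones below. This is a sketch under assumptions: the CIDR ranges, resource names, and the choice to place the two subnets in different availability zones are illustrative and not taken from the sample project.

```hcl
# AWS hub VPC (sketch). CIDRs and names are illustrative assumptions.
data "aws_availability_zones" "available" {
  state = "available"
}

resource "aws_vpc" "hub" {
  cidr_block           = "10.0.0.0/16"
  enable_dns_support   = true
  enable_dns_hostnames = true
}

resource "aws_subnet" "public" {
  vpc_id                  = aws_vpc.hub.id
  cidr_block              = "10.0.0.0/24"
  availability_zone       = data.aws_availability_zones.available.names[0]
  map_public_ip_on_launch = true
}

resource "aws_subnet" "private" {
  vpc_id            = aws_vpc.hub.id
  cidr_block        = "10.0.1.0/24"
  availability_zone = data.aws_availability_zones.available.names[1]
}

resource "aws_internet_gateway" "hub" {
  vpc_id = aws_vpc.hub.id
}

# Public route table: default route to the internet gateway.
resource "aws_route_table" "public" {
  vpc_id = aws_vpc.hub.id

  route {
    cidr_block = "0.0.0.0/0"
    gateway_id = aws_internet_gateway.hub.id
  }
}

# Private route table: starts with only the local route;
# VPN routes are propagated into it later.
resource "aws_route_table" "private" {
  vpc_id = aws_vpc.hub.id
}

resource "aws_route_table_association" "public" {
  subnet_id      = aws_subnet.public.id
  route_table_id = aws_route_table.public.id
}

resource "aws_route_table_association" "private" {
  subnet_id      = aws_subnet.private.id
  route_table_id = aws_route_table.private.id
}
```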

Then, we’ll set up the AWS Simple AD service, which we’ll use as a way to share internal DNS records with Azure and Google Cloud. For simplicity here, the module assigns the first two availability zones to the service.
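The directory module could be sketched roughly as follows. The input variable names are hypothetical; Simple AD expects two subnets in different availability zones, which is why the sketch takes a list of subnet IDs.

```hcl
# Simple AD directory used for DNS (sketch). Variable names are hypothetical.
variable "vpc_id" { type = string }
variable "subnet_ids" { type = list(string) } # two subnets in different AZs
variable "domain_name" { type = string }      # e.g. a private domain you control

variable "directory_password" {
  type      = string
  sensitive = true
}

resource "aws_directory_service_directory" "dns" {
  name     = var.domain_name
  password = var.directory_password
  type     = "SimpleAD"
  size     = "Small"

  vpc_settings {
    vpc_id     = var.vpc_id
    subnet_ids = var.subnet_ids
  }
}

# The directory's DNS server addresses, to be consumed by the
# Google Cloud and Azure network modules.
output "dns_ip_addresses" {
  value = aws_directory_service_directory.dns.dns_ip_addresses
}
```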

Then, we create the Google Compute Network with a single private subnet. Due to the way load balancing works in Google Cloud, we don’t need to create a public subnet. We also configure a private Cloud DNS zone with conditional forwarding to the Simple AD service. This will allow Google Compute instances to resolve internal AWS hostnames. Since this is one-way, AWS instances will not be able to resolve internal Google Cloud hostnames, but Google Cloud DNS supports inbound DNS forwarding if this is a requirement.
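One way the Google network and forwarding zone might be expressed is shown below. Treat it as a sketch: the names, the CIDR range, and the input variables are assumptions, and the forwarding zone is pinned to the google-beta provider only because the text describes the feature as beta.

```hcl
# Google Cloud network with DNS forwarding to AWS (sketch).
# Variable names, resource names, and CIDRs are assumptions.
variable "google_region" { type = string }
variable "aws_dns_domain" { type = string }             # domain served by Simple AD
variable "aws_dns_ip_addresses" { type = list(string) } # Simple AD DNS servers

resource "google_compute_network" "main" {
  name                    = "multicloud-network"
  auto_create_subnetworks = false
}

resource "google_compute_subnetwork" "private" {
  name          = "private"
  region        = var.google_region
  network       = google_compute_network.main.id
  ip_cidr_range = "10.1.0.0/24"
}

# Private zone that forwards queries for the AWS domain
# to the Simple AD DNS servers.
resource "google_dns_managed_zone" "aws_forwarding" {
  provider   = google-beta
  name       = "aws-internal"
  dns_name   = "${var.aws_dns_domain}." # zone names end with a trailing dot
  visibility = "private"

  private_visibility_config {
    networks {
      network_url = google_compute_network.main.id
    }
  }

  forwarding_config {
    dynamic "target_name_servers" {
      for_each = var.aws_dns_ip_addresses
      content {
        ipv4_address = target_name_servers.value
      }
    }
  }
}
```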

The Azure Virtual Network is created next with one public and one private subnet. The network uses the Simple AD service directly for its DNS servers: while Azure offers Private DNS, it doesn’t natively support conditional forwarding like Google does, so we need to specify the Simple AD servers as the DNS servers for the network.
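A sketch of the Azure network, assuming hypothetical variable names and illustrative address ranges, might look like this. The key detail is the dns_servers argument, which points the whole virtual network at the Simple AD addresses.

```hcl
# Azure virtual network using Simple AD for DNS (sketch).
# Names, location variable, and CIDRs are assumptions.
variable "azure_location" { type = string }
variable "aws_dns_ip_addresses" { type = list(string) }

resource "azurerm_resource_group" "main" {
  name     = "multicloud-network"
  location = var.azure_location
}

resource "azurerm_virtual_network" "main" {
  name                = "multicloud-vnet"
  resource_group_name = azurerm_resource_group.main.name
  location            = azurerm_resource_group.main.location
  address_space       = ["10.2.0.0/16"]

  # Resolve names through the AWS Simple AD DNS servers.
  dns_servers = var.aws_dns_ip_addresses
}

resource "azurerm_subnet" "public" {
  name                 = "public"
  resource_group_name  = azurerm_resource_group.main.name
  virtual_network_name = azurerm_virtual_network.main.name
  address_prefixes     = ["10.2.0.0/24"]
}

resource "azurerm_subnet" "private" {
  name                 = "private"
  resource_group_name  = azurerm_resource_group.main.name
  virtual_network_name = azurerm_virtual_network.main.name
  address_prefixes     = ["10.2.1.0/24"]
}
```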

CONFIGURING SITE-TO-SITE VPNs

Now that we have each network set up, we can start configuring the site-to-site VPNs. We start in AWS by creating a VPN gateway for the VPC, making sure that VPN routes are propagated from the gateway to the VPC route tables. Then we create two customer gateways with VPN connections, one for Google and one for Azure. For Google, we can use Border Gateway Protocol (BGP) to automatically share routes across the VPN, but for Azure, we need to manually configure the routes. While both AWS and Azure support BGP, the configuration each side requires is not currently compatible with the other.
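The AWS side of this could be sketched as follows. The variable names and ASN values are assumptions for illustration; the Google connection uses BGP, while the Azure connection is marked static and given an explicit route.

```hcl
# AWS side of both VPN connections (sketch). Variable names and ASNs
# are assumptions.
variable "aws_vpc_id" { type = string }
variable "aws_public_route_table_id" { type = string }
variable "aws_private_route_table_id" { type = string }
variable "google_vpn_ip" { type = string } # public IP of the Google VPN gateway
variable "azure_vpn_ip" { type = string }  # public IP of the Azure VPN gateway
variable "azure_network_cidr" { type = string }

resource "aws_vpn_gateway" "hub" {
  vpc_id = var.aws_vpc_id
}

# Propagate routes learned over the VPNs into both route tables.
resource "aws_vpn_gateway_route_propagation" "public" {
  vpn_gateway_id = aws_vpn_gateway.hub.id
  route_table_id = var.aws_public_route_table_id
}

resource "aws_vpn_gateway_route_propagation" "private" {
  vpn_gateway_id = aws_vpn_gateway.hub.id
  route_table_id = var.aws_private_route_table_id
}

# Google: dynamic routing over BGP.
resource "aws_customer_gateway" "google" {
  bgp_asn    = 65000 # must match the ASN of the Google Cloud Routers
  ip_address = var.google_vpn_ip
  type       = "ipsec.1"
}

resource "aws_vpn_connection" "google" {
  vpn_gateway_id      = aws_vpn_gateway.hub.id
  customer_gateway_id = aws_customer_gateway.google.id
  type                = "ipsec.1"
  static_routes_only  = false
}

# Azure: static routing with a manually configured route.
resource "aws_customer_gateway" "azure" {
  bgp_asn    = 65001 # set on the resource, but not used for static routing
  ip_address = var.azure_vpn_ip
  type       = "ipsec.1"
}

resource "aws_vpn_connection" "azure" {
  vpn_gateway_id      = aws_vpn_gateway.hub.id
  customer_gateway_id = aws_customer_gateway.azure.id
  type                = "ipsec.1"
  static_routes_only  = true
}

resource "aws_vpn_connection_route" "azure" {
  vpn_connection_id      = aws_vpn_connection.azure.id
  destination_cidr_block = var.azure_network_cidr
}
```

Each aws_vpn_connection exposes attributes for its two tunnels (tunnel1_address, tunnel1_preshared_key, and so on, with tunnel2_ equivalents), which the Google and Azure sides need in order to connect.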

Configuring the Google side of the VPN connection requires creating a VPN gateway along with two VPN tunnels to AWS and two Cloud Routers. The tunnels send traffic between the networks and the routers manage the traffic and share the available routes using BGP.
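A possible shape for the Google side, assuming a Classic VPN gateway and a hypothetical aws_tunnels variable populated from the AWS connection's tunnel attributes, is sketched below. Resource names and the ASN are illustrative.

```hcl
# Google side of the AWS VPN (sketch, Classic VPN assumed).
# Variable names, resource names, and the ASN are assumptions.
variable "google_region" { type = string }
variable "google_network" { type = string } # network ID or self link
variable "aws_tunnels" {
  # One entry per AWS tunnel, built from tunnelN_* connection attributes.
  type = list(object({
    address       = string # tunnelN_address
    preshared_key = string # tunnelN_preshared_key
    cgw_inside_ip = string # tunnelN_cgw_inside_address (Google end)
    vgw_inside_ip = string # tunnelN_vgw_inside_address (AWS end)
    bgp_asn       = number # tunnelN_bgp_asn (AWS ASN)
  }))
}

resource "google_compute_address" "vpn" {
  name   = "aws-vpn-ip"
  region = var.google_region
}

resource "google_compute_vpn_gateway" "aws" {
  name    = "aws-vpn-gateway"
  network = var.google_network
  region  = var.google_region
}

# Classic VPN needs forwarding rules for ESP, UDP 500, and UDP 4500.
resource "google_compute_forwarding_rule" "esp" {
  name        = "aws-vpn-esp"
  region      = var.google_region
  ip_protocol = "ESP"
  ip_address  = google_compute_address.vpn.address
  target      = google_compute_vpn_gateway.aws.id
}

resource "google_compute_forwarding_rule" "udp500" {
  name        = "aws-vpn-udp500"
  region      = var.google_region
  ip_protocol = "UDP"
  port_range  = "500"
  ip_address  = google_compute_address.vpn.address
  target      = google_compute_vpn_gateway.aws.id
}

resource "google_compute_forwarding_rule" "udp4500" {
  name        = "aws-vpn-udp4500"
  region      = var.google_region
  ip_protocol = "UDP"
  port_range  = "4500"
  ip_address  = google_compute_address.vpn.address
  target      = google_compute_vpn_gateway.aws.id
}

# One Cloud Router per tunnel, sharing the same ASN.
resource "google_compute_router" "aws" {
  count   = length(var.aws_tunnels)
  name    = "aws-router-${count.index + 1}"
  network = var.google_network
  region  = var.google_region

  bgp {
    asn = 65000 # must match the AWS customer gateway's bgp_asn
  }
}

resource "google_compute_vpn_tunnel" "aws" {
  count              = length(var.aws_tunnels)
  name               = "aws-tunnel-${count.index + 1}"
  region             = var.google_region
  peer_ip            = var.aws_tunnels[count.index].address
  shared_secret      = var.aws_tunnels[count.index].preshared_key
  target_vpn_gateway = google_compute_vpn_gateway.aws.id
  router             = google_compute_router.aws[count.index].self_link

  depends_on = [
    google_compute_forwarding_rule.esp,
    google_compute_forwarding_rule.udp500,
    google_compute_forwarding_rule.udp4500,
  ]
}

# BGP session over each tunnel, using the link-local /30 that AWS assigns.
resource "google_compute_router_interface" "aws" {
  count      = length(var.aws_tunnels)
  name       = "aws-interface-${count.index + 1}"
  region     = var.google_region
  router     = google_compute_router.aws[count.index].name
  ip_range   = "${var.aws_tunnels[count.index].cgw_inside_ip}/30"
  vpn_tunnel = google_compute_vpn_tunnel.aws[count.index].name
}

resource "google_compute_router_peer" "aws" {
  count           = length(var.aws_tunnels)
  name            = "aws-peer-${count.index + 1}"
  region          = var.google_region
  router          = google_compute_router.aws[count.index].name
  interface       = google_compute_router_interface.aws[count.index].name
  peer_ip_address = var.aws_tunnels[count.index].vgw_inside_ip
  peer_asn        = var.aws_tunnels[count.index].bgp_asn
}
```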

Configuring the Azure side of the VPN is similar to configuring the Google side. We create a Virtual Network Gateway along with two Local Network Gateways, one per AWS tunnel endpoint, and two corresponding connections (tunnels).
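The Azure side might be sketched like this. The variable names are hypothetical, and the gateway SKU and public IP settings are assumptions that may need adjusting for your azurerm version and region.

```hcl
# Azure side of the AWS VPN (sketch). Variable names, SKUs, and the
# public IP settings are assumptions.
variable "resource_group_name" { type = string }
variable "location" { type = string }
variable "virtual_network_name" { type = string }
variable "gateway_subnet_cidr" { type = string }
variable "aws_vpc_cidr" { type = string }
variable "aws_tunnels" {
  # One entry per AWS tunnel (tunnelN_address, tunnelN_preshared_key).
  type = list(object({
    address       = string
    preshared_key = string
  }))
}

# Azure requires the gateway's subnet to be named "GatewaySubnet".
resource "azurerm_subnet" "gateway" {
  name                 = "GatewaySubnet"
  resource_group_name  = var.resource_group_name
  virtual_network_name = var.virtual_network_name
  address_prefixes     = [var.gateway_subnet_cidr]
}

resource "azurerm_public_ip" "vpn" {
  name                = "aws-vpn-ip"
  resource_group_name = var.resource_group_name
  location            = var.location
  allocation_method   = "Static"
  sku                 = "Standard"
}

resource "azurerm_virtual_network_gateway" "aws" {
  name                = "aws-vpn-gateway"
  resource_group_name = var.resource_group_name
  location            = var.location
  type                = "Vpn"
  vpn_type            = "RouteBased"
  sku                 = "VpnGw1"

  ip_configuration {
    name                          = "default"
    public_ip_address_id          = azurerm_public_ip.vpn.id
    private_ip_address_allocation = "Dynamic"
    subnet_id                     = azurerm_subnet.gateway.id
  }
}

# One local network gateway per AWS tunnel endpoint; the address space
# is the statically configured route back to the AWS VPC.
resource "azurerm_local_network_gateway" "aws" {
  count               = length(var.aws_tunnels)
  name                = "aws-tunnel-${count.index + 1}"
  resource_group_name = var.resource_group_name
  location            = var.location
  gateway_address     = var.aws_tunnels[count.index].address
  address_space       = [var.aws_vpc_cidr]
}

resource "azurerm_virtual_network_gateway_connection" "aws" {
  count                      = length(var.aws_tunnels)
  name                       = "aws-connection-${count.index + 1}"
  resource_group_name        = var.resource_group_name
  location                   = var.location
  type                       = "IPsec"
  virtual_network_gateway_id = azurerm_virtual_network_gateway.aws.id
  local_network_gateway_id   = azurerm_local_network_gateway.aws[count.index].id
  shared_key                 = var.aws_tunnels[count.index].preshared_key
}
```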

Now we can bring this all together in a main Terraform file that instantiates all of the modules and links them together.
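To give a sense of how such a root file ties the modules together, here is a sketch. The module paths, input names, and output names are all hypothetical and will differ from the sample project; the point is that each module's outputs feed the inputs of the next.

```hcl
# main.tf (sketch). Module paths, inputs, and outputs are hypothetical;
# the referenced variables would be declared in variables.tf.
module "aws_vpc" {
  source     = "./modules/aws-vpc"
  cidr_block = var.aws_network_cidr
}

module "aws_directory" {
  source             = "./modules/aws-directory"
  vpc_id             = module.aws_vpc.vpc_id
  subnet_ids         = module.aws_vpc.subnet_ids
  domain_name        = var.dns_domain
  directory_password = var.directory_password
}

module "google_network" {
  source               = "./modules/google-network"
  google_region        = var.google_region
  aws_dns_domain       = var.dns_domain
  aws_dns_ip_addresses = module.aws_directory.dns_ip_addresses
}

module "azure_network" {
  source               = "./modules/azure-network"
  azure_location       = var.azure_location
  aws_dns_ip_addresses = module.aws_directory.dns_ip_addresses
}

# The VPN module receives identifiers from all three networks
# and creates both ends of each site-to-site connection.
module "vpn" {
  source = "./modules/vpn"

  aws_vpc_id                 = module.aws_vpc.vpc_id
  aws_network_cidr           = var.aws_network_cidr
  aws_public_route_table_id  = module.aws_vpc.public_route_table_id
  aws_private_route_table_id = module.aws_vpc.private_route_table_id

  google_region  = var.google_region
  google_network = module.google_network.network

  azure_resource_group_name  = module.azure_network.resource_group_name
  azure_location             = var.azure_location
  azure_virtual_network_name = module.azure_network.virtual_network_name
  azure_network_cidr         = var.azure_network_cidr
}
```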

To build the infrastructure, we need to supply values for the variables declared in variables.tf, run terraform init to download the providers and initialize the local modules, and then run terraform apply and confirm the changes. Note that it may take 10-20 minutes after the apply completes for the VPN connections to become active. To test the connection, deploy an EC2 instance in the AWS private subnet that hosts a web server on a port above 1024 and curl it from a Google Compute instance or an Azure VM.
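For the connectivity test, a throwaway instance could be defined along these lines. This is a sketch: the variable names, the Amazon Linux 2023 AMI filter, the instance type, and port 8080 are all assumptions, and the security group only admits traffic from the remote network ranges you pass in.

```hcl
# Throwaway test web server in the AWS private subnet (sketch).
# Variable names, AMI filter, instance type, and port are assumptions.
variable "aws_vpc_id" { type = string }
variable "aws_private_subnet_id" { type = string }
variable "remote_cidrs" { type = list(string) } # Google and Azure network ranges

data "aws_ami" "amazon_linux" {
  most_recent = true
  owners      = ["amazon"]

  filter {
    name   = "name"
    values = ["al2023-ami-2023*-x86_64"]
  }
}

# Allow the test port only from the other clouds' networks.
resource "aws_security_group" "vpn_test" {
  name   = "vpn-test"
  vpc_id = var.aws_vpc_id

  ingress {
    from_port   = 8080
    to_port     = 8080
    protocol    = "tcp"
    cidr_blocks = var.remote_cidrs
  }
}

resource "aws_instance" "vpn_test" {
  ami                    = data.aws_ami.amazon_linux.id
  instance_type          = "t3.micro"
  subnet_id              = var.aws_private_subnet_id
  vpc_security_group_ids = [aws_security_group.vpn_test.id]

  # Serve a single static page with Python's built-in HTTP server.
  user_data = <<-EOF
    #!/bin/bash
    mkdir -p /srv/www && cd /srv/www
    echo "hello from AWS" > index.html
    nohup python3 -m http.server 8080 &
  EOF
}

# URL to curl from a Google Compute instance or Azure VM.
output "test_url" {
  value = "http://${aws_instance.vpn_test.private_ip}:8080/"
}
```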

Learn more about our cloud-agnostic, multi-cloud enabled Magpie data engineering platform by scheduling a demo. If you’re interested in working on problems like these, apply for one of our open positions on our Careers page.

Jon Lounsbury is the VP of Engineering at Silectis.

You can find him on GitHub and LinkedIn.